MC // Thermal Management

How To Choose Between CRAHs and CRACs

Design, maintenance, and sustainability considerations

All data center designs start with a myriad of decisions — what type of cooling system to employ is just one of them. But, it’s a big one because it’s usually where sustainability goals meet budget constraints. So, design engineers usually end up altering their first-pass, ultimate-efficiency specifications in order to make financial sense.

Once data centers become operational, most of the institutional knowledge about the design disappears: the design and construction teams move on to their next project, and operations teams scramble to get up to speed. In the first year, operations teams need to learn everything about the new equipment and systems and establish maintenance routines to keep it all running at peak performance. Far too often, they are overwhelmed and preventative maintenance is overlooked, causing performance issues that can lead to equipment failure and, ultimately, downtime.

Numerous data center cooling designs are readily available for owners who are looking to drive sustainability efforts without breaking the bank. These include plenum and ducted returns, hot- and cold-aisle containment, in-row cooling, rear-door heat exchangers, and free cooling, to name a few. Add to all of these choices the thousands of SKUs offered by the major manufacturers, and it’s obvious why design decisions aren’t always straightforward and easy.

Despite the design, construction, and startup challenges at new facilities, there are some basics that pretty much apply across the board. Let’s look at traditional computer room air handler (CRAH) and computer room air conditioner (CRAC) designs to see how they differ.

CRAHs: As the name implies, these boxes handle air by way of fans, cooling coils, and actuated valves that vary the amount of cooling the unit produces. They use chilled water from a central plant or skid-mounted chiller plant as their cooling-conveyance medium. They aren’t limited by distance or lift, and leak detection is easy in these types of systems. CRAH designs are typically more energy efficient, and they provide an inherent ride-through time.

CRACs: These don’t differ much from any other building air conditioner. The indoor units house the fans and cooling coils, and the compressors sit either in the indoor unit or in a separate outdoor unit. CRACs typically use refrigerant as their cooling-conveyance medium. They are less expensive on a first-cost basis, and the units share no single point of failure.

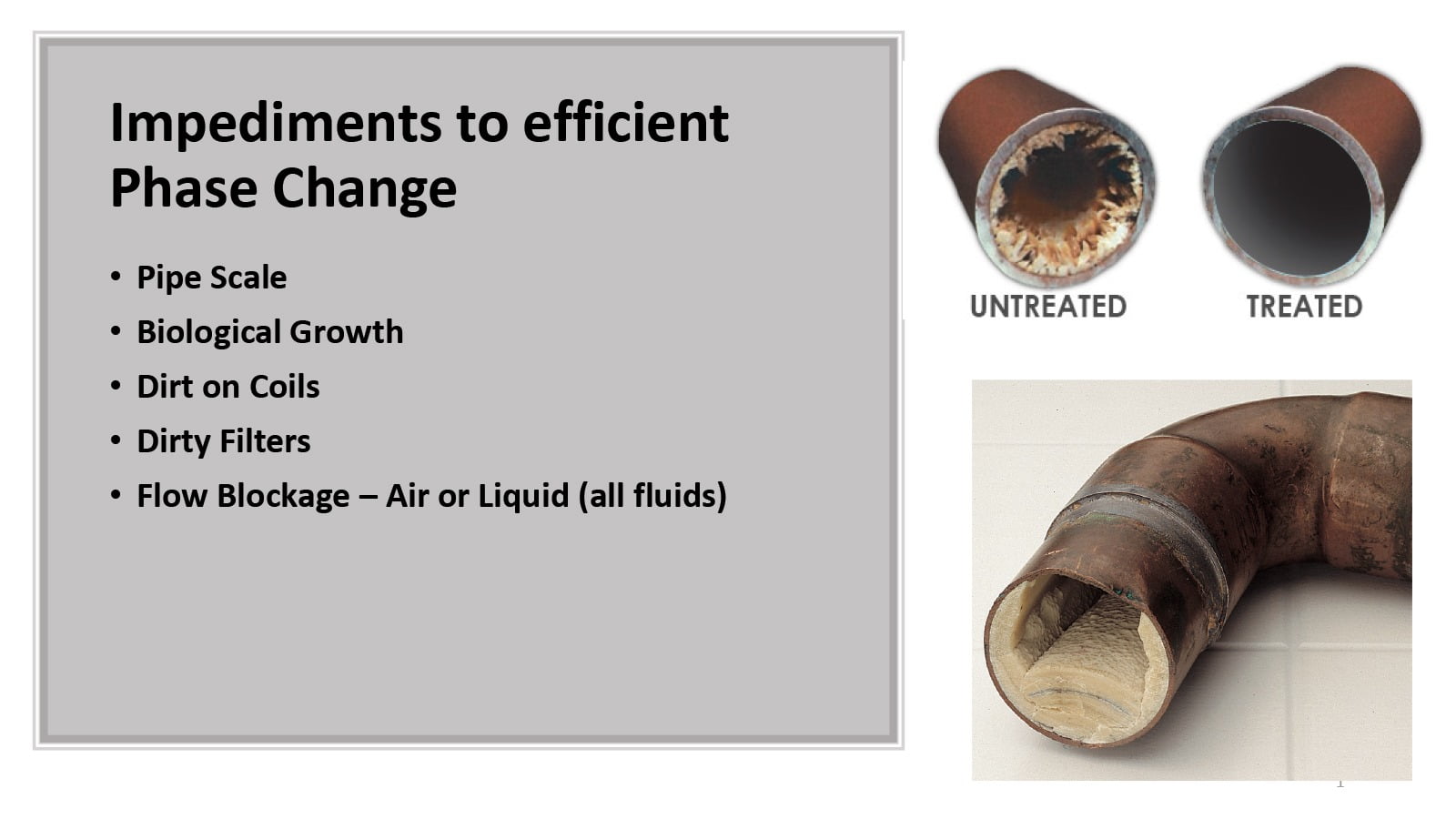

Figure 1: Impediments to efficient phase change.

Photos courtesy of Dennis Cronin

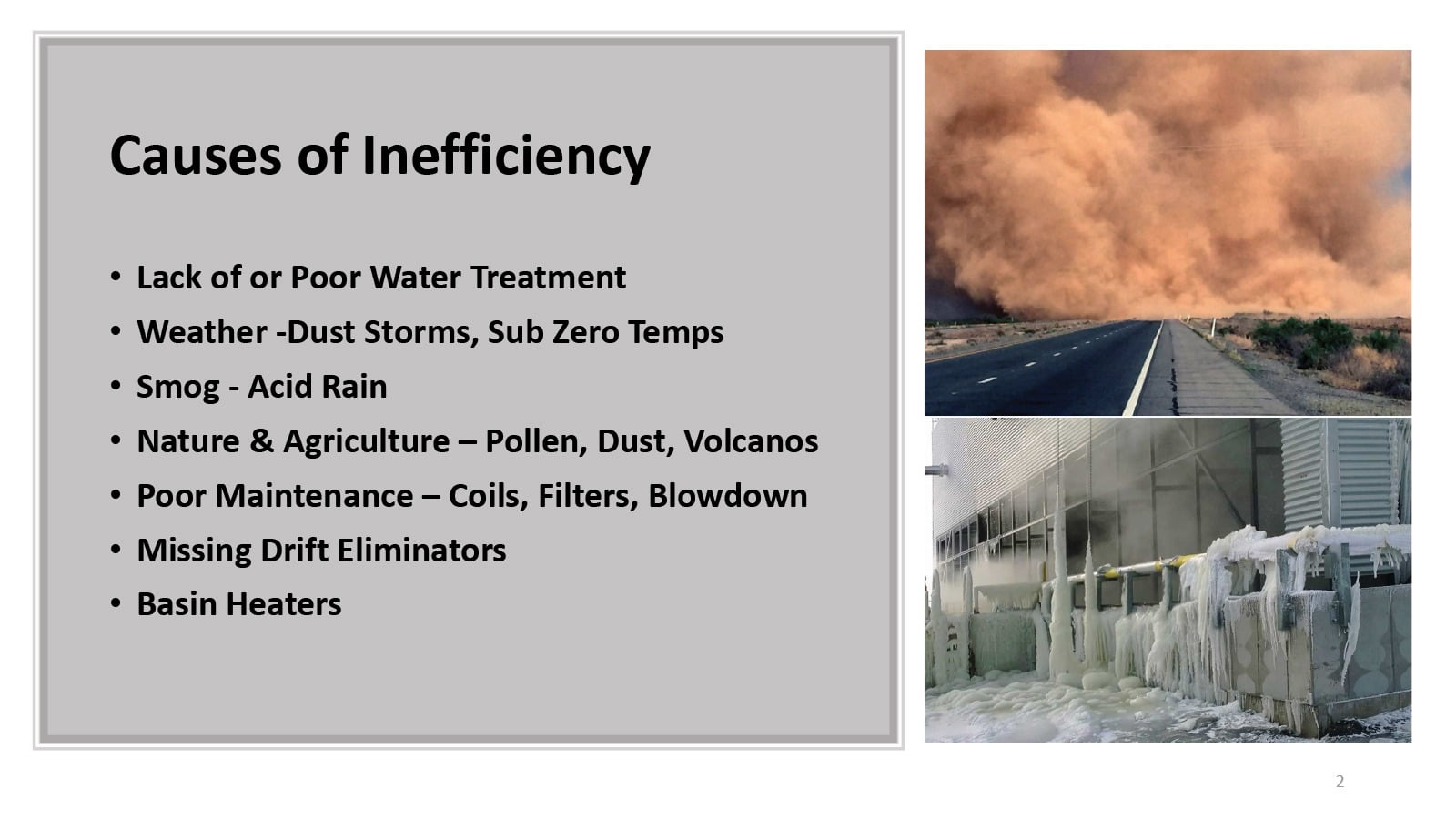

Figure 2: Causes of inefficiency.

In most cases, the data center size will determine whether CRACs or CRAHs are used. CRACs may not have sufficient lift to reach condensing units for data centers in multistory buildings, and piping may exceed their distance limits in warehouse-type facilities. The limitations — 30 to 80 feet in elevation and 100 to 200 feet in distance — can sometimes be mitigated by upsizing the piping, but that adds to refrigerant volume and cost. That’s why large data centers tend to utilize water-cooled HVAC systems.
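As a rough illustration of that screening step, the sketch below classifies a proposed CRAC piping route against the ranges quoted above. The function names and the three-way "within / borderline / exceeds" labels are hypothetical, and the range endpoints are taken from this article rather than from any manufacturer's data, so treat the result as a first-pass flag, not a design check.

```python
# Range endpoints quoted in the article, in feet. Real limits are
# model-specific; these are placeholders, not manufacturer data.
LIFT_RANGE_FT = (30.0, 80.0)    # vertical rise to the condensing unit
RUN_RANGE_FT = (100.0, 200.0)   # total refrigerant piping distance

# Ordered from best to worst so results can be compared.
SEVERITY = ["within", "borderline", "exceeds"]


def classify(value_ft, limit_range):
    """Classify one dimension against a (low, high) limit range."""
    low, high = limit_range
    if value_ft <= low:
        return "within"        # under even the most restrictive limit
    if value_ft <= high:
        return "borderline"    # depends on the unit; may need upsized piping
    return "exceeds"           # beyond the most generous limit quoted


def screen_crac_piping(lift_ft, run_ft):
    """Return the worse of the lift and distance classifications."""
    results = [
        classify(lift_ft, LIFT_RANGE_FT),
        classify(run_ft, RUN_RANGE_FT),
    ]
    return max(results, key=SEVERITY.index)


if __name__ == "__main__":
    # Example: a condenser four floors up with a long horizontal run.
    print(screen_crac_piping(lift_ft=55, run_ft=230))
```

A "borderline" result is where upsized piping might come into play, with the added refrigerant volume and cost that implies; an "exceeds" result suggests looking at a chilled-water design instead.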

But, regardless of the equipment ratings or design parameters, efficiency is not guaranteed; it has to be maintained. Both systems lose efficiency if their cooling coils become dirty. Air filters and pre-filters address this, but, eventually, contaminants permeate all surfaces. How fast this occurs is a function of how dirty the environment is and how good the filtration is.

As CRAC systems lose refrigerant, they become increasingly less efficient. At some point, the compressor will simply stop working. If leaked refrigerant reaches a high enough concentration level, it could set off the area smoke detectors, resulting in an emergency power off (EPO) event and firemen trudging through the whitespace. And, even if it doesn’t trigger the alarms, it’s still a costly issue.

The heavy-walled, chilled-water piping in CRAH designs is inherently more robust than refrigerant piping, so the likelihood of a catastrophic failure is rather remote. However, if the water is not properly treated, pipe failure will eventually occur due to microbial attack or scale buildup.

While this is much more likely to occur on the warmer condenser water piping, it has been known to occur on both sides of the compressor circuits.

Figure 3: Clean versus dirty coils.

Building Exterior

Everyone talks about the internal data center components to the point that, too often, the external components are completely forgotten. Outdoor components end up with many contaminants landing on them. Just as a car collects dirt and dust if it’s parked on the street for a week, the same is true for outdoor cooling components.

Generally, fins on CRAC system DX coils are aluminum, so they oxidize and become coated with air pollutants. Chilled water systems pose additional challenges because, while cooling towers are very efficient, they draw contaminants out of the air during their cooling process. When this happens, heavier particles precipitate out and land in the tower basin. Others, including biologicals, remain suspended. They are carried back to the pumps and chillers, where they coat the heat exchange bundles or attach themselves to the pipes. Colonies can grow in the warm water of the condenser loop.

The impact on sustainability caused by contaminants in condenser systems is twofold: Not only do they cause efficiency losses in the system itself, but correcting the problem requires chemicals and additional water.

Data center sustainability strategies are heavily weighted toward the design and build functions, but there is a serious need to devote equal attention to maintaining what was installed.

Think of data centers like new cars. If you change the oil, rotate the tires and check the alignment, and keep the safety sensors/cameras clean, the car will last well past 100,000 miles. But, if you leave the car dirty, regularly skip the maintenance, run the tires bald, etc., you can’t ask why you’re stranded on the road … you know why.

Dennis Cronin

Dennis Cronin is CEO of DCIRN for the Americas region.
